Interview with Athena Vakali, Prof. at the Aristotle University, School of Informatics and head of Datalab

Since 2006, Twitter has become a kind of aggregator of information. But Twitter is likely never going to be the same again. Elon Musk has already implemented a raft of changes, such as dismissing half of the workforce, cutting the content curation team and monetizing the verified blue tick. He also said he has the key to curbing Twitter trolls and bots. To shed more light on this, we had a discussion with Athena Vakali, Prof. at the Aristotle University, School of Informatics and head of the Laboratory on Data and Web Science (Datalab), about bots, large-scale automation and Artificial Intelligence.

Q: Can you please tell us a few words about you and the Data & Web Science Laboratory?

Athena: The Data and Web Science Lab (Datalab) is a research laboratory at the School of Informatics, Aristotle University of Thessaloniki, with extensive expertise in the areas of big data management, data mining and knowledge detection, human-centric machine learning and AI-based analytics. The Datalab team is engaged in high-quality research and development activities in the areas of ICT technologies and cross-discipline Data and Web intelligent solutions, with additional interest in multi-domain synergies. The team has designed and implemented innovative solutions in several sectors, such as health and sensing data analytics, the energy sector, smart cities, cloud computing, and collective awareness platforms. In the last 5 years, Datalab has been actively involved in more than 20 research and innovation actions under various EU, international and national funding programs. Moreover, in recent years, our lab has focused particularly on the online social media domain, where we analyze user networks and their behaviors, and phenomena like information diffusion, disinformation, political polarization, etc.

Q: One of the tools that the Laboratory has developed is called Bot-Detective. Can you please explain how it works and maybe start by explaining the facts of what we call a bot, since the term is often misunderstood, especially nowadays?

Athena: Bot-Detective is a tool, publicly available as an online web service, that can detect bot accounts on Twitter. A Twitter bot is automated software that is designed to control a Twitter account and automatically post tweets, retweet or interact with other users on the platform. These bots are often used to perform repetitive tasks, such as posting a large number of tweets in a short amount of time, retweeting specific content or posts made by specific accounts, or following a large number of users. Some Twitter bots are designed to provide useful information or services, while others may be used for spamming or other malicious purposes (e.g., political propaganda). The use of bots is not prohibited by Twitter, as long as they follow a certain set of rules. It is not always easy to identify a bot, since some of them are more sophisticated and mimic human behavior in an effort to remain undetected by Twitter’s bot detection algorithms.

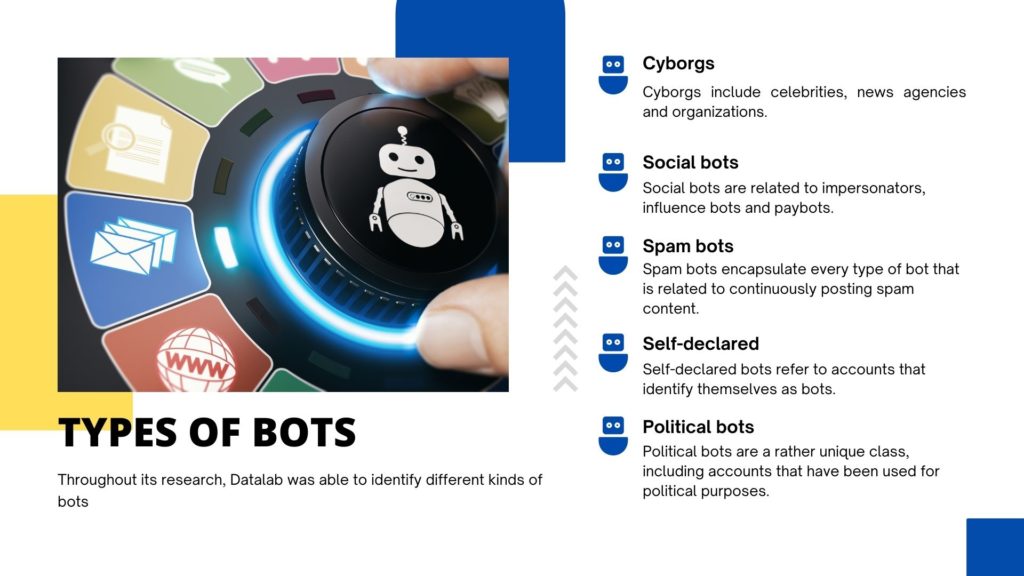

In this context, we have introduced Bot-Detective, which is able to identify bot accounts using a machine learning (AI) model. Bot-Detective checks the activity of an account and measures its similarity to the activity of human or bot accounts. Thus, Bot-Detective can tell whether an account is more likely to be a human or a bot; in fact, its inference is not binary (bot or not), but it classifies accounts into different types of bots. Throughout our research we were able to identify different kinds of bots, namely:

- Cyborgs: Cyborgs include celebrities, news agencies and organizations.

- Social bots: Social bots are related to impersonators, influence bots and paybots.

- Spam bots: Spam bots encapsulate every type of bot that is related to continuously posting spam content.

- Self-declared: Self-declared bots refer to accounts that identify themselves as bots.

- Political bots: Political bots are a rather unique class, including accounts that have been used for political purposes.

The machine learning model used by our tool has been trained on publicly available labeled Twitter bot datasets that have been previously published in the research community. Bot-Detective adopts a novel explainable bot-detection approach, which, to the best of our knowledge, is the first one to offer interpretable, responsible, and AI-driven bot identification on Twitter. In other words, we offer detailed explanations of the reasons/features that pushed our model to identify a specific account as a bot.
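To make the idea of feature-level explanations more concrete, here is a minimal sketch in Python. It is not Bot-Detective's actual model or feature set, which the interview does not describe; the feature names, weights, bias, and the simple linear scoring are all assumptions chosen purely for illustration. In a linear model, each feature's contribution to the score is its weight times its value, so the contributions can be ranked to show what pushed a prediction toward "bot".

```python
import math

# Hypothetical weights for a toy linear bot scorer (illustrative values only,
# not taken from Bot-Detective).
WEIGHTS = {
    "tweets_per_hour": 0.8,
    "retweet_ratio": 1.5,
    "followers_to_following": -0.6,
}
BIAS = -2.0


def explain(features):
    """Return (bot_probability, contributions ranked by absolute impact)."""
    # Contribution of each feature to the linear score: weight * value.
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    score = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-score))  # logistic function
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked


# A made-up account used only to exercise the sketch.
account = {"tweets_per_hour": 4.0, "retweet_ratio": 0.9, "followers_to_following": 0.5}
probability, ranked = explain(account)
print(f"bot probability: {probability:.2f}")
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
```

In this toy case the posting rate contributes most to the score, which is the kind of statement an explainable detector can surface alongside its prediction.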

Q: Bad vs good bots: some bots are malicious (e.g., inflating follower counts, pushing polarizing messages, confusing users), but others add value to the platform by contributing informational or creative content. Can you tell us more about that difference, given that bots accounted for more than 42% of global website traffic in 2021 and knowing that Artificial Intelligence and Machine Learning will further improve the performance of bots?

Athena: Indeed, Twitter bots can be designed to provide useful information or services. For example, professional accounts of media or news organizations may employ Twitter bots to republish the content from their websites on Twitter. This is an automated procedure, and the respective accounts are frequently officially registered as bots on Twitter. Typically, these accounts are classified as “cyborgs” by our tool Bot-Detective. It should be noted that such bot behavior is legitimate and does not harm any individual.

On the other hand, there are bot accounts (individual accounts or coordinated groups of accounts) that automatically post content which may affect the behavior of other users and of society. For example, bots can manipulate cryptocurrency prices by influencing average users, who may see a large number of Twitter accounts heavily tweeting/retweeting positively/negatively about a cryptocurrency. Such users may be misled into buying/selling cryptocurrencies, without knowing or realizing that the content posted is orchestrated by automated bot actions.

There are many other examples of such “malicious” bot activities on Twitter: from hate speech to misinformation about health issues (COVID-19, vaccination, etc.) or political propaganda. Of course, these are only the incidents known so far. With the advent of AI (and natural language processing, NLP), it becomes easier for a bot to generate original content on Twitter (rather than just retweeting content of others) that is indistinguishable from human content. Such content is expected to increase the challenges for bot detection algorithms and services.

Q: What is platform manipulation and how are conversations on Twitter amplified by Artificial Intelligence? Will we end up sifting through content generated by AI and bots?

Athena: Platform manipulation is the act of using social media platforms, like Twitter, to spread misleading or false information or to otherwise manipulate public opinion. This can be done by individuals or groups for various reasons, such as to influence political events, to damage the reputation of a person or organization, or for financial gain. For example, bots which adopt AI techniques to generate texts, images or even videos can be used for such purposes. These bots can be used to “shape” a particular viewpoint or idea so that it appears more popular than it actually is, influencing the opinions of other users and increasing the visibility of manipulated content. It becomes harder and harder for people to distinguish between genuine conversations and those that have been artificially amplified. Recent research [1,2,3] has shown that humans seem unable to identify AI-generated text (their accuracy ranges from 50% to 55%), i.e., detecting such content is effectively a random guessing process.

Thus, as AI technology continues to advance, it is likely that more and more content will be generated by AI-powered bots, making it more difficult for people to tell genuine content from artificial content. Of course, this is where social media platforms need to intervene and provide mechanisms to prevent such phenomena (e.g., by mapping accounts to specific verified individuals). On the other hand, we need to take the following into consideration: (i) even these automated accounts (let’s call them “puppets”) need an actual person (a master) in charge to operate them, and (ii) current AI tools are not easy for non-ICT individuals to operate; they require people with at least intermediate programming knowledge. In conclusion, it is indeed possible that AI-dependent bot content generation will expand, but human intervention will still be necessary, and platforms will need to apply stricter, more robust, and more efficient countermeasures against these actions.

Q: Twitter is still using automated content moderation tools and third-party contractors to prevent the spread of misinformation and inflammatory posts while Twitter employees review high-profile violations. Can we rely purely on automation?

Athena: On the one hand, automation is necessary, since the volume of information and the number of accounts are huge and reviewing them manually is not possible. On the other hand, automated content moderation tools cannot always be correct or accurate (e.g., tools based on machine learning almost never achieve 100% accuracy). In particular, for Twitter bots, a well-known tool (Botometer) has been reported to be 84% accurate, while our tool (Bot-Detective) is 92% accurate. No matter how much we improve our methods, purely automated content moderation can lead to mistakes. The human-in-the-loop approach, where humans check the AI results before proceeding to moderation, is a potential solution. To this end, we believe that it is necessary that AI solutions also provide explanations about their decisions, which could further help humans to make important and trusted decisions. That is the main goal of our Bot-Detective tool, which does not only classify an account as bot or human, but also provides a series of additional information, namely: (i) the type of bot (e.g., “cyborgs” are typically “good bots” and thus different from “spam bots”, which are “bad bots”), (ii) the confidence of the classification, based on the strength of the similarities/probabilities (e.g., 90% similarity indicates strong confidence, while 51% indicates a very weak inference), and (iii) explanations of the characteristics that led the AI model to characterize an account as a bot (e.g., due to the content it generates, its network or its activity).
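As a rough illustration of how bot type and confidence could feed a human-in-the-loop workflow, consider the following Python sketch. It is an assumption-laden example, not a description of how Bot-Detective or Twitter routes accounts: the function `triage`, the label strings, the action names, and the `weak` threshold of 0.60 are all hypothetical, while the 0.90 threshold simply mirrors the "strong confidence" example mentioned above.

```python
# Labels treated as benign here; the interview describes cyborgs as typically "good bots".
BENIGN_TYPES = {"human", "cyborg"}


def triage(bot_type, confidence, strong=0.90, weak=0.60):
    """Map a classifier result to a review action (hypothetical policy).

    No account is moderated automatically: potentially harmful results are
    queued for a human reviewer, and confidence only sets the priority.
    """
    if bot_type in BENIGN_TYPES:
        return "no action"
    if confidence >= strong:
        return "human review: high priority"
    if confidence >= weak:
        return "human review: low priority"
    return "monitor only"  # very weak inference, e.g. 51%


print(triage("spam bot", 0.90))
print(triage("political bot", 0.51))
print(triage("cyborg", 0.99))
```

The point of the design is that the model's output informs the reviewer rather than replacing them; where exactly to place the thresholds would depend on the reviewing capacity available.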

About Athena Vakali

Athena is a professor at the School of Informatics, Aristotle University, Greece, where she leads the Laboratory on Data and Web Science (Datalab). She holds a PhD degree in Informatics (Aristotle University), an MSc degree in Computer Science (Purdue University, USA), and a BSc degree in Mathematics. Her current research interests include Data Science topics with emphasis on big data and online social network mining and analytics, next-generation Internet applications and sensing analytics, and the management of online data sources in cloud, edge and decentralized settings. She has supervised 12 completed PhD theses, and she has received awards for her educational and research work, which extends to mentoring and student empowerment (ACM, ACMW). Prof. Vakali has published more than 190 papers in refereed journals and conferences, and she is an Associate Editor of the ACM Computing Surveys journal and a member of the editorial boards of the “Computers & Electrical Engineering” journal and ICST Transactions on Social Informatics (her publications have received over 9590 citations, with an h-index of 41 according to Google Scholar). She has coordinated and participated in more than 25 research projects in EU FP7, H2020, international and national programs. She has served as a member of the EU Steering Committee for the Future Internet Assembly (2012-14) and she was appointed Director of the Graduate Program in Informatics, Aristotle University (2014-15). She has co-chaired major conferences and program committees, such as the ACM Gender Equality Summit (Greek Chapter) 2022, the ACM/IEEE Web Intelligence Conference 2019 (PC co-chair), the EU Network of Excellence 2nd Internet Science Conference (EINS 2015), the 15th Web Information Systems Engineering conference (WISE 2014), and the 5th International Conference on Model & Data Engineering (MEDI 2015). She has also served as workshops co-chair and has been a member of numerous international conferences and workshops.

You can reach Athena via email or follow Datalab on Facebook, Twitter and LinkedIn.

References

1. Fagni, Tiziano, et al. “TweepFake: About detecting deepfake tweets.” PLoS ONE 16.5 (2021): e0251415.

2. Radford, Alec, et al. “Language models are unsupervised multitask learners.” OpenAI blog 1.8 (2019): 9.

3. Adelani, David Ifeoluwa, et al. “Generating sentiment-preserving fake online reviews using neural language models and their human- and machine-based detection.” International Conference on Advanced Information Networking and Applications. Springer, Cham, 2020.