PaladorScheduler

A job scheduler for large-scale data processing (more info at datascouting.com).

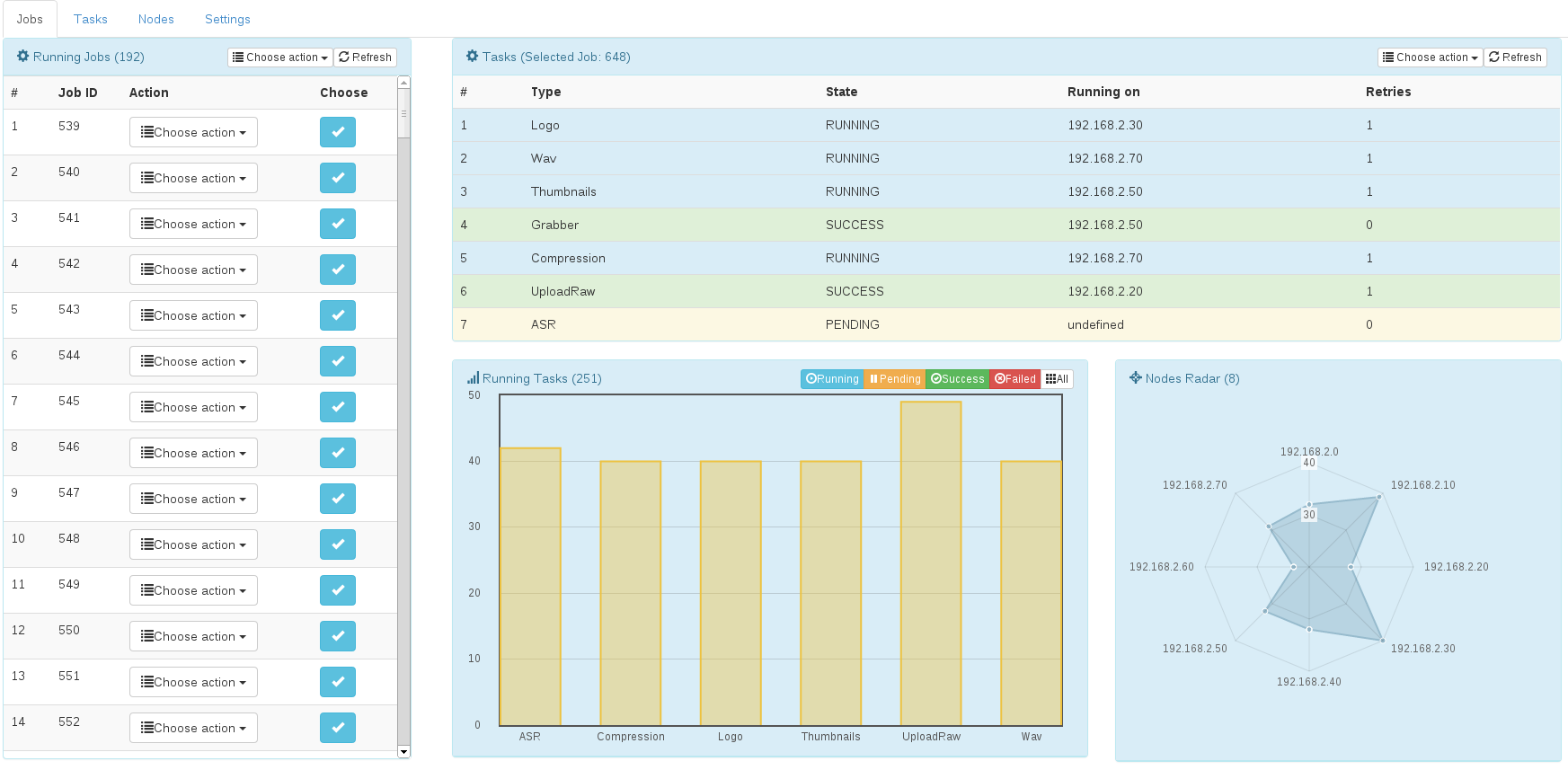

DataScouting PaladorScheduler is a software ecosystem for scheduling, distributing, processing and managing enterprise workloads and large volumes of data in real time. It is highly available and scales out by default, using load balancing and the data locality features of products such as Hadoop HDFS. It integrates with enterprise and legacy systems, adding modern management and processing capabilities to them, and lets the user monitor and manage the whole enterprise cluster through a responsive web interface.

Key features:

- Easy to use programming interface

- Advanced scheduling and prioritization

- Compliance with reactive traits

- Platform independence

- Monitoring and visualization through web interface

Program the logic, not the software

Create chains and trees of dependent tasks using simple and intuitive programming paradigms in Java. Integrate with any legacy or monolithic code, or with any third-party tool or library. Use the provided abstraction layer to avoid low-level programming hassles such as concurrency and data security.

Schedule and prioritize based on your needs

Select from a variety of scheduling algorithms and prioritization techniques. Create tasks and assign scheduling policies to them based on the locality of the related data, the infrastructure setup, the current load and more. Define queues with priorities and retry policy restrictions.

Compliance with reactive design patterns

Scale out whenever needed. Assign cluster leaders and standby leaders for 24/7 high availability and self-healing.

Compile once, run everywhere

Build a cluster that is not restricted by hardware limitations and does not require hardware or software homogeneity to run.

Web UI Access

Monitor the health status of your cluster to identify key bottleneck issues and nodes that need replacement.

Architecture

Tasks

The smallest unit of work in PaladorScheduler is the Task. A Task is an object that performs a small, well-defined piece of work. A Task can have prerequisites and can itself be a prerequisite for one or more tasks that follow. Each task has a set of rules, or policies, that define when it runs and where it runs.

Attributes that determine when a task runs:

- Its priority level

- The size of the queue it belongs to

- The allowed number of retries

- The retry frequency
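
As a rough illustration of how priority and retry attributes could interact, here is a minimal Java sketch. It is not the PaladorScheduler API: `TimingPolicySketch`, `TimingPolicy`, `executionOrder` and `runWithRetries` are hypothetical names, the rule that a higher number means a higher priority is an assumption, and queue size is not modelled.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.PriorityQueue;
import java.util.function.Supplier;

// Illustrative only: every name here is hypothetical, not the PaladorScheduler API.
final class TimingPolicySketch {

    /** The attributes that decide when a task runs (queue size is left out). */
    static final class TimingPolicy {
        final String taskName;
        final int priority;          // assumption: a higher value runs first
        final int maxRetries;        // retries allowed after the first attempt
        final long retryDelayMillis; // retry frequency, as a pause between attempts

        TimingPolicy(String taskName, int priority, int maxRetries, long retryDelayMillis) {
            this.taskName = taskName;
            this.priority = priority;
            this.maxRetries = maxRetries;
            this.retryDelayMillis = retryDelayMillis;
        }
    }

    /** Returns task names in the order a priority queue would release them. */
    static List<String> executionOrder(List<TimingPolicy> pending) {
        PriorityQueue<TimingPolicy> queue = new PriorityQueue<>(
                Comparator.comparingInt((TimingPolicy p) -> p.priority).reversed());
        queue.addAll(pending);
        List<String> order = new ArrayList<>();
        while (!queue.isEmpty()) {
            order.add(queue.poll().taskName);
        }
        return order;
    }

    /** Runs the work until it succeeds or the allowed retries are used up. */
    static boolean runWithRetries(TimingPolicy policy, Supplier<Boolean> work) {
        for (int attempt = 0; attempt <= policy.maxRetries; attempt++) {
            if (attempt > 0) {
                try {
                    Thread.sleep(policy.retryDelayMillis);
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    return false; // stop retrying when interrupted
                }
            }
            if (work.get()) {
                return true;
            }
        }
        return false;
    }
}
```

With `maxRetries` set to 2, the work is attempted at most three times: the first attempt plus two retries.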

Attributes that determine where a task runs:

- Associated data locality

- Random factor

- Cluster node load

- Cluster node queue size

- Node restrictions
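
The placement attributes above could be combined into a single score per node. The following Java sketch is one way to do that under stated assumptions: the class and method names (`PlacementSketch`, `Node`, `score`, `choose`) and all the weights are invented for illustration and do not describe how PaladorScheduler actually ranks nodes.

```java
import java.util.List;
import java.util.Optional;
import java.util.Random;
import java.util.Set;

// Illustrative only: names and weights are assumptions, not the PaladorScheduler API.
final class PlacementSketch {

    static final class Node {
        final String address;        // "IP:PORT"
        final double load;           // 0.0 = idle, 1.0 = saturated
        final int queuedTasks;       // current queue size on the node
        final Set<String> localData; // identifiers of data stored on the node

        Node(String address, double load, int queuedTasks, Set<String> localData) {
            this.address = address;
            this.load = load;
            this.queuedTasks = queuedTasks;
            this.localData = localData;
        }
    }

    /** Higher is better: reward data locality, penalize load and queue size. */
    static double score(Node node, String dataId, Random random) {
        double locality = node.localData.contains(dataId) ? 2.0 : 0.0;
        double jitter = random.nextDouble() * 0.05; // small random factor to break ties
        return locality - node.load - 0.1 * node.queuedTasks + jitter;
    }

    /** Picks the best-scoring node among those the task is allowed to run on. */
    static Optional<Node> choose(List<Node> nodes, Set<String> allowedAddresses,
                                 String dataId, Random random) {
        Node best = null;
        double bestScore = Double.NEGATIVE_INFINITY;
        for (Node node : nodes) {
            if (!allowedAddresses.contains(node.address)) {
                continue; // node restriction: skip nodes the task may not use
            }
            double current = score(node, dataId, random);
            if (current > bestScore) {
                best = node;
                bestScore = current;
            }
        }
        return Optional.ofNullable(best);
    }
}
```

In this sketch locality outweighs load, so a busier node that already holds the data is preferred over an idle node that would have to fetch it. A real policy would make these weights configurable.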

Jobs

A collection of tasks that perform a set of small pieces of work to achieve an end result is called a Job. You can visualize a Job as a tree or a chain of tasks. The job begins with the root task of the tree and ends when all leaves of the tree have completed. Tree nodes on the same level execute in parallel, while tree nodes on different levels of the same branch form a prerequisite hierarchy that executes in sequence. Jobs are not defined by the user. They are generated automatically and uniquely identified by the types and sequence of the tasks they consist of.
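
The tree model can be sketched with plain `CompletableFuture` composition. This is an illustration, not PaladorScheduler code: `JobTreeSketch`, `TaskNode`, `run` and `signature` are hypothetical names, and the signature format is only one possible way to derive an identifier from task types and their sequence. In this sketch sibling tasks start in parallel once their parent has finished, and each branch runs in sequence.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Queue;
import java.util.concurrent.CompletableFuture;

// Illustrative only: every name here is hypothetical, not the PaladorScheduler API.
final class JobTreeSketch {

    static final class TaskNode {
        final String type;
        final List<TaskNode> children;

        TaskNode(String type, TaskNode... children) {
            this.type = type;
            this.children = Arrays.asList(children);
        }
    }

    /**
     * Runs this task, then starts all of its children in parallel.
     * The returned future completes when every leaf below it has finished.
     * The log must be a thread-safe queue; "running" a task just records its type.
     */
    static CompletableFuture<Void> run(TaskNode node, Queue<String> log) {
        return CompletableFuture.runAsync(() -> log.add(node.type))
                .thenCompose(done -> {
                    List<CompletableFuture<Void>> branches = new ArrayList<>();
                    for (TaskNode child : node.children) {
                        branches.add(run(child, log));
                    }
                    return CompletableFuture.allOf(branches.toArray(new CompletableFuture[0]));
                });
    }

    /** Derives an identifier from the task types and their order in the tree. */
    static String signature(TaskNode node) {
        if (node.children.isEmpty()) {
            return node.type;
        }
        StringBuilder sb = new StringBuilder(node.type).append('(');
        for (int i = 0; i < node.children.size(); i++) {
            if (i > 0) {
                sb.append(',');
            }
            sb.append(signature(node.children.get(i)));
        }
        return sb.append(')').toString();
    }
}
```

For a root task `fetch` with children `parse` (followed by `store`) and `index`, `signature` returns `fetch(parse(store),index)`, and `run(...).join()` returns only after both `store` and `index` have completed.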

Queues

Tasks of the same type can be placed in a node-local or cluster-aware queue to limit the maximum number of tasks of that type executing in parallel. The user can enable and configure both a cluster-aware and a node-local queue for each Task type.
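
To make the idea of a node-local limit concrete, the sketch below caps the number of tasks of one type running at once with a `java.util.concurrent.Semaphore`. It is an assumption-laden illustration: `NodeLocalQueueSketch`, `configure`, `submit` and `availableSlots` are hypothetical names, and a cluster-aware queue, which would need coordination across nodes, is not modelled.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.Semaphore;
import java.util.function.Supplier;

// Illustrative only: every name here is hypothetical, not the PaladorScheduler API.
final class NodeLocalQueueSketch {

    private final Map<String, Semaphore> slotsByType = new ConcurrentHashMap<>();

    /** Enables a queue for a task type with a maximum number of parallel tasks. */
    void configure(String taskType, int maxParallel) {
        slotsByType.put(taskType, new Semaphore(maxParallel, true)); // fair: FIFO waiters
    }

    /** Runs the work, waiting first if the limit for its type is already reached. */
    <T> T submit(String taskType, Supplier<T> work) {
        Semaphore slots = slotsByType.get(taskType);
        if (slots == null) {
            return work.get(); // no queue configured for this type: run unrestricted
        }
        slots.acquireUninterruptibly();
        try {
            return work.get();
        } finally {
            slots.release();
        }
    }

    /** Free slots for a type, or -1 if no queue is configured for it. */
    int availableSlots(String taskType) {
        Semaphore slots = slotsByType.get(taskType);
        return slots == null ? -1 : slots.availablePermits();
    }
}
```

After `configure("resize", 2)`, any number of threads may call `submit("resize", ...)`, but at most two of the submitted pieces of work execute at the same time on this node.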

Nodes

Nodes represent the physical machines, virtual machines or individual instances that participate in the cluster. Each node is identified by its IP:PORT pair. A user can run as many nodes per machine as they like, provided each node uses a different port. A node can have one of three roles:

- Leaders – Are aware of the cluster status and topology and can assume leadership and coordination of the cluster.

- Masters – Are aware of the cluster status and topology but cannot assume leadership and coordination of the cluster.

- Workers – Are not aware of the cluster status. They can only receive tasks and respond to the sender with the execution result.
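
The node identity and the three roles can be modelled as a small data structure. The Java sketch below is hypothetical (`NodeRoleSketch`, `Role` and `NodeId` are not taken from PaladorScheduler); it only encodes what the list above states: which roles know the cluster topology, which can assume leadership, and that two nodes on the same machine differ by port.

```java
import java.util.Objects;

// Illustrative only: every name here is hypothetical, not the PaladorScheduler API.
final class NodeRoleSketch {

    enum Role {
        LEADER(true, true),   // knows the topology and can coordinate the cluster
        MASTER(true, false),  // knows the topology but cannot lead
        WORKER(false, false); // only receives tasks and returns results

        final boolean knowsTopology;
        final boolean canLead;

        Role(boolean knowsTopology, boolean canLead) {
            this.knowsTopology = knowsTopology;
            this.canLead = canLead;
        }
    }

    /** A node is identified by its IP:PORT pair. */
    static final class NodeId {
        final String ip;
        final int port;

        NodeId(String ip, int port) {
            this.ip = ip;
            this.port = port;
        }

        /** Parses "IP:PORT"; the last colon separates the port. */
        static NodeId parse(String address) {
            int colon = address.lastIndexOf(':');
            if (colon <= 0 || colon == address.length() - 1) {
                throw new IllegalArgumentException("expected IP:PORT but got " + address);
            }
            return new NodeId(address.substring(0, colon),
                    Integer.parseInt(address.substring(colon + 1)));
        }

        @Override
        public boolean equals(Object other) {
            if (!(other instanceof NodeId)) {
                return false;
            }
            NodeId that = (NodeId) other;
            return ip.equals(that.ip) && port == that.port;
        }

        @Override
        public int hashCode() {
            return Objects.hash(ip, port);
        }

        @Override
        public String toString() {
            return ip + ":" + port;
        }
    }
}
```

Because equality covers both fields, `10.0.0.5:7001` and `10.0.0.5:7002` count as two distinct nodes on the same machine.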